AI Security Isn't Optional

Why every security team needs ML capabilities now, not later—and how to start.

If you're still doing security without AI, you're bringing a knife to a drone fight.

Not because AI is magic. Because attackers are already using it and the volume of data we need to analyze has exploded beyond human capacity.

The scale problem

Modern cloud environments generate terabytes of security telemetry daily. Logs, network flows, authentication events, API calls—it never stops. A human analyst can maybe investigate 50 alerts in a shift. Your environment is generating 50,000.

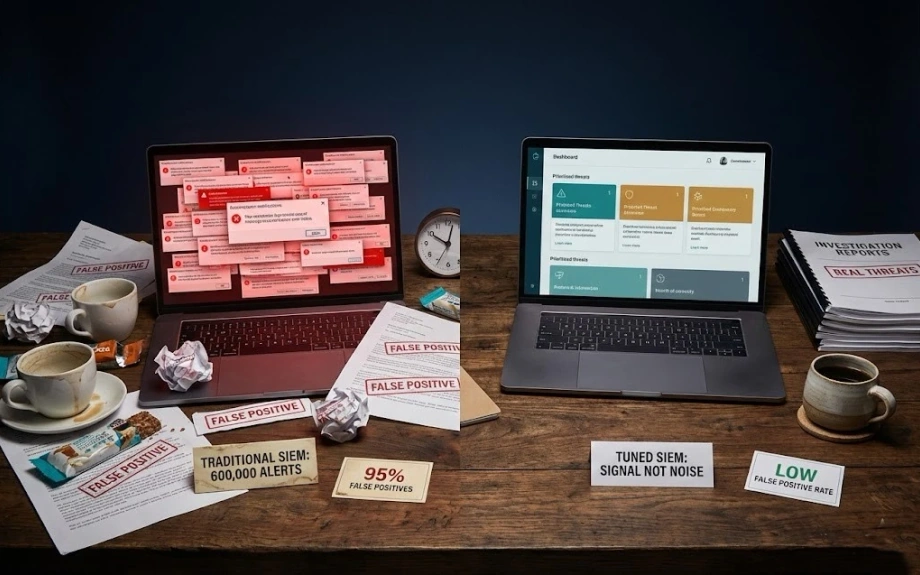

Traditional SIEM rules can't keep up. They're static. They look for known patterns. They generate alerts for everything because they're better at detecting "something happened" than "something bad happened."

Result: alert fatigue. 95% false positive rates. Real threats buried in noise. Analysts burned out from chasing ghosts.

Where AI actually helps

Behavioral baselines. ML models learn what normal looks like for every user, every service, every pattern. Then flag deviations. Not "this action is bad" but "this action is weird for this context."

Example: User logs in from a new country. Is that an attack? Depends. Sales team traveling? Normal. Engineer who never leaves their home office? Worth investigating. Rules can't capture that nuance. ML can.
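
To make the traveling-sales-rep versus home-office-engineer distinction concrete, here is a minimal Python sketch. It is not how any particular product works: the `LoginBaseline` class, its thresholds, and the user names are all hypothetical. The idea is a crude per-user novelty rate, meaning how often this user has turned up somewhere new in the past.

```python
from collections import Counter, defaultdict


class LoginBaseline:
    """Per-user baseline of login countries (hypothetical helper, not a product API)."""

    def __init__(self, min_history=20, novelty_threshold=0.1):
        self.min_history = min_history              # logins needed before judging a user
        self.novelty_threshold = novelty_threshold  # assumed cutoff; tune per environment
        self.countries = defaultdict(Counter)       # user -> Counter of login countries

    def observe(self, user, country):
        self.countries[user][country] += 1

    def is_unusual(self, user, country):
        history = self.countries[user]
        total = sum(history.values())
        if country in history or total < self.min_history:
            return False  # seen before, or too little data to judge
        # Distinct countries per login: a rough estimate of how often
        # this user turns up somewhere new.
        novelty_rate = len(history) / total
        return novelty_rate < self.novelty_threshold


baseline = LoginBaseline()
for _ in range(30):
    baseline.observe("engineer", "US")
for country in ["US", "DE", "JP", "BR", "SG", "US"] * 5:
    baseline.observe("sales_rep", country)

print(baseline.is_unusual("engineer", "FR"))   # True: 1 country in 30 logins
print(baseline.is_unusual("sales_rep", "FR"))  # False: 5 countries in 30 logins
```

Same event, different verdict, because the baseline is per user. A real model would fold in far more context (time of day, device, role), but the shape is the same.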

Correlation at scale. Finding multi-stage attacks that span hours or days across thousands of events. Human analysts can't hold that much context in their heads. ML can process the entire timeline and spot patterns.
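
A rough sketch of the correlation step, under loud assumptions: this is deterministic sequence matching, not a learned model, and the event type names and the `find_attack_chains` function are made up for illustration. It shows the mechanical part that humans struggle with, which is grouping events per entity and checking for an ordered chain inside a time window.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_attack_chains(events, stages, window):
    """Return entities whose events contain `stages` in order within `window`.

    events: iterable of (timestamp, entity, event_type) tuples.
    """
    by_entity = defaultdict(list)
    for ts, entity, kind in events:
        by_entity[entity].append((ts, kind))

    hits = {}
    for entity, items in by_entity.items():
        items.sort()
        # Try each occurrence of the first stage as a possible chain start.
        for i, (start_ts, kind) in enumerate(items):
            if kind != stages[0]:
                continue
            matched = [start_ts]
            next_stage = 1
            for ts, k in items[i + 1:]:
                if ts - start_ts > window:
                    break  # chain took too long; stop scanning from this start
                if next_stage < len(stages) and k == stages[next_stage]:
                    matched.append(ts)
                    next_stage += 1
            if next_stage == len(stages):
                hits[entity] = matched
                break
    return hits


t0 = datetime(2024, 3, 1, 9, 0)
events = [
    (t0, "alice", "password_spray"),
    (t0 + timedelta(hours=5), "alice", "login_success"),
    (t0 + timedelta(hours=30), "alice", "role_change"),
    (t0 + timedelta(hours=31), "bob", "login_success"),
    (t0 + timedelta(hours=52), "alice", "bulk_download"),
]
stages = ["password_spray", "login_success", "role_change", "bulk_download"]
print(list(find_attack_chains(events, stages, timedelta(days=3))))  # ['alice']
```

Where ML earns its keep is in replacing the hand-written `stages` list with patterns learned from the full timeline.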

False positive reduction. This is the killer app. Train models on your historical incidents—what turned out to be real versus false alarms. The system learns your environment's quirks and stops alerting on the noise.
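
Here is about the simplest possible stand-in for "train on your historical incidents": a smoothed frequency estimate of how often each alert type turned out to be real. It is a sketch, not a production classifier. The `AlertTriageModel` class, the rule names, and the source names are hypothetical, and a real system would use many more features than a (rule, source) pair.

```python
from collections import defaultdict


class AlertTriageModel:
    """Estimates P(alert is real) per (rule, source) from past analyst verdicts."""

    def __init__(self, prior_true=1.0, prior_false=1.0):
        # Laplace-style priors so unseen or rarely seen alert types score near 0.5.
        self.prior_true = prior_true
        self.prior_false = prior_false
        self.outcomes = defaultdict(lambda: [0, 0])  # key -> [real, false_alarm]

    def fit(self, history):
        """history: iterable of (rule, source, was_real) tuples."""
        for rule, source, was_real in history:
            self.outcomes[(rule, source)][0 if was_real else 1] += 1
        return self

    def score(self, rule, source):
        real, false_alarm = self.outcomes.get((rule, source), (0, 0))
        return (real + self.prior_true) / (
            real + false_alarm + self.prior_true + self.prior_false
        )


history = (
    [("impossible_travel", "vpn_gateway", False)] * 48
    + [("impossible_travel", "vpn_gateway", True)] * 2
    + [("new_admin_grant", "iam", True)] * 9
    + [("new_admin_grant", "iam", False)] * 1
)
model = AlertTriageModel().fit(history)
print(round(model.score("impossible_travel", "vpn_gateway"), 2))  # 0.06
print(round(model.score("new_admin_grant", "iam"), 2))            # 0.83
print(model.score("never_seen_rule", "anywhere"))                 # 0.5
```

Use the score to rank the queue rather than to silently drop alerts. A low-scoring alert type can still produce the one real incident.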

What AI doesn't do

Replace human judgment. AI is great at "this is anomalous." Humans are still needed for "this is dangerous and here's what we should do about it."

Work out of the box. Every environment is different. Generic ML models trained on someone else's data won't understand your normal. You need to train on your data, tune for your context.

Fix bad security fundamentals. AI won't save you if you don't have basic logging, your IAM is a mess, or you're not patching. It amplifies what you're already doing—garbage in, garbage out.

How to actually start

Don't try to build everything from scratch. Use platforms that already have ML baked in—Chronicle, Splunk, Datadog. They've solved the hard problems. You focus on tuning for your environment.

Start with one use case. Pick your biggest pain point. Maybe you can't reliably detect compromised accounts. Or you're drowning in vulnerability scanner alerts. Focus there first.

Get your data house in order. ML needs clean, consistent data. If your logs are a mess, fix that first. Standardize formats. Enrich context. Make sure you're actually collecting what matters.
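
What "standardize formats" can look like in practice, as a small sketch. The two source formats (`app_a`, `app_b`) and every field name here are invented for illustration. Substitute whatever your log sources actually emit.

```python
from datetime import datetime, timezone


def normalize(record, source):
    """Map a raw auth event from a known source into one common schema."""
    if source == "app_a":    # assumed format: epoch seconds, short field names
        ts = datetime.fromtimestamp(record["ts"], tz=timezone.utc)
        user, ip, action = record["username"], record["ip"], record["evt"]
    elif source == "app_b":  # assumed format: ISO 8601 timestamp with UTC offset
        ts = datetime.fromisoformat(record["time"]).astimezone(timezone.utc)
        user, ip, action = record["email"], record["client_ip"], record["action"]
    else:
        raise ValueError(f"unknown log source: {source}")

    return {
        "timestamp": ts.isoformat(),   # always UTC
        "user": user.strip().lower(),  # one canonical spelling per identity
        "src_ip": ip,
        "action": action.lower(),
        "source": source,              # keep provenance for later enrichment
    }


a = normalize(
    {"ts": 1709280000, "username": "Alice@Example.com ", "ip": "10.0.0.5", "evt": "LOGIN"},
    "app_a",
)
b = normalize(
    {"time": "2024-03-01T10:00:00+02:00", "email": "alice@example.com",
     "client_ip": "10.0.0.5", "action": "login"},
    "app_b",
)
print(a["timestamp"] == b["timestamp"], a["user"] == b["user"])  # True True
```

Two sources, two timestamp conventions, two spellings of the same user. After normalization a model sees one identity doing one thing at one time.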

Iterate fast. Deploy a model. See what it catches and what it misses. Tune. Repeat. ML isn't set-and-forget; it's continuous improvement.
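
"See what it catches and what it misses" is easier to act on with two numbers per tuning round. A minimal sketch, assuming you can line up the IDs the model flagged against the IDs analysts later confirmed as real incidents (the function name and ID scheme are hypothetical):

```python
def precision_recall(flagged_ids, confirmed_ids):
    """Precision: share of flags that were real. Recall: share of real incidents flagged."""
    flagged, confirmed = set(flagged_ids), set(confirmed_ids)
    true_positives = len(flagged & confirmed)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(confirmed) if confirmed else 0.0
    return precision, recall


# One tuning round: the model flagged four events, analysts confirmed three incidents.
precision, recall = precision_recall(
    flagged_ids=["e1", "e2", "e3", "e4"],
    confirmed_ids=["e2", "e4", "e9"],
)
print(precision)         # 0.5
print(round(recall, 2))  # 0.67
```

Track both across rounds. Tuning that raises precision by quietly tanking recall is not an improvement.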

Real example from my work

Built a user behavior analytics system using Chronicle. Trained it on 6 months of authentication data. It learned patterns like: this engineer works these hours, from these locations, accessing these systems.

2 weeks after deployment, it flagged a login that looked totally normal: valid credentials, VPN, during business hours. But the behavior was off. Different typing speed. Different mouse movement patterns. Accessing systems in a different order than usual.

Turned out the account was compromised. Attacker had the credentials but couldn't perfectly mimic human behavior patterns. Traditional rules would have missed it completely. The ML model caught it because it understood that user's specific normal.
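
To give a feel for how "accessing systems in a different order than usual" can become a score, here is a toy sketch. To be clear, this is not the Chronicle setup described above and not Chronicle's API. It is a generic first-order transition model, and the `AccessOrderModel` class, the smoothing value, and the system names are all assumptions. Typing speed and mouse movement would need other telemetry and are not modeled here.

```python
import math
from collections import Counter, defaultdict


class AccessOrderModel:
    """Learns which system a user typically opens after which (one model per user)."""

    def __init__(self, smoothing=0.1):
        self.smoothing = smoothing               # keeps unseen transitions from scoring infinity
        self.transitions = defaultdict(Counter)  # system -> Counter of next systems
        self.systems = set()

    def fit(self, sessions):
        for session in sessions:
            self.systems.update(session)
            for current, nxt in zip(session, session[1:]):
                self.transitions[current][nxt] += 1
        return self

    def surprise(self, session):
        """Average negative log-probability per transition; higher means more unusual."""
        steps = list(zip(session, session[1:]))
        if not steps:
            return 0.0
        vocab = len(self.systems | set(session))
        total = 0.0
        for current, nxt in steps:
            counts = self.transitions.get(current, Counter())
            prob = (counts[nxt] + self.smoothing) / (
                sum(counts.values()) + self.smoothing * vocab
            )
            total -= math.log(prob)
        return total / len(steps)


usual = ["vpn", "email", "git", "ci"]
order_model = AccessOrderModel().fit([usual] * 50)

print(order_model.surprise(usual))                                  # close to zero
print(order_model.surprise(["vpn", "prod-db", "hr-portal", "git"]))  # much higher
```

The credentials, VPN, and timing are identical in both sessions. Only the order of access separates them, which is exactly the kind of signal a static rule never looks at.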

The uncomfortable truth

Security teams without AI capabilities are already behind. Not "might fall behind someday"—already there. The attack surface is too big, the data volume too high, the attacker tools too sophisticated.

You don't need a PhD in machine learning. You need to understand what ML is good at, where to apply it, and how to operate it in production.

Start now. Start small. But start. Because the gap between AI-enabled security and traditional security is only getting wider.

And attackers aren't waiting for you to catch up.

© RAJ PATHAK
