SIEM Without the Pain

How to build security monitoring that actually works—without drowning your team.

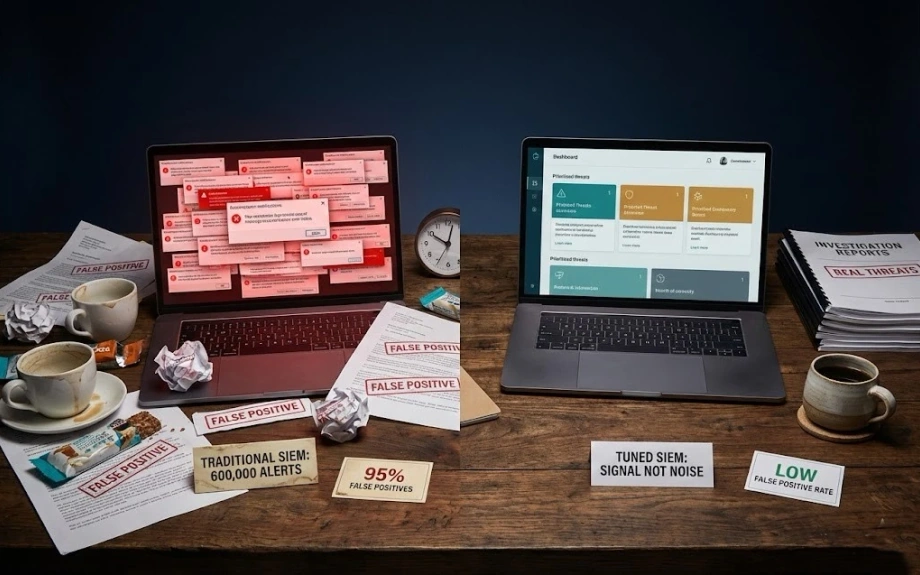

SIEM projects have a reputation for being expensive failures. Six-figure licenses. Six-month implementations. Six hundred thousand alerts nobody reads.

Doesn't have to be that way. Here's how to build security monitoring that your team will actually use.

Why traditional SIEM fails

Bought because of compliance requirements, not because anyone wanted better security visibility. So it becomes a checkbox exercise instead of an operational tool.

Implemented by vendors who don't understand your environment. Generic rules that worked for someone else but generate nonsense in your context. Alert fatigue sets in within weeks.

Operated by overworked analysts who spend their days acknowledging false positives and never get time to hunt actual threats. The SIEM becomes a source of tickets, not insights.

Start with why

What problem are you actually trying to solve? "We need a SIEM" isn't a problem. These are problems:

We can't tell if someone's compromising accounts. We don't know if data is being exfiltrated. Compliance wants proof we're monitoring security events. We need to detect ransomware before it spreads.

Pick one problem. Build monitoring that solves that problem. Prove value. Then expand.

What to actually monitor

You don't need to ingest everything. Focus on what matters:

Authentication events. Success and failure. From everywhere—cloud, apps, VPN, everything. This is how you detect compromised accounts and insider threats.
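Pulling authentication from everywhere only pays off if the events can be compared, so it helps to normalize them into one shape on ingest. A minimal sketch, assuming two hypothetical sources (a VPN and an identity provider) whose field names are invented for illustration; your real log schemas will differ, but the mapping pattern is the same:

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class AuthEvent:
    """Common shape for authentication events, whichever system produced them."""
    user: str
    source: str        # which system logged it, e.g. "vpn" or "idp"
    success: bool
    src_ip: str
    timestamp: datetime

def from_vpn(record: dict) -> AuthEvent:
    # Hypothetical VPN format: {"username", "result", "client_ip", "epoch"}
    return AuthEvent(
        user=record["username"].lower(),
        source="vpn",
        success=record["result"] == "ACCEPT",
        src_ip=record["client_ip"],
        timestamp=datetime.fromtimestamp(record["epoch"], tz=timezone.utc),
    )

def from_idp(record: dict) -> AuthEvent:
    # Hypothetical identity-provider format: {"actor", "outcome", "ip", "time"},
    # where "time" is an ISO 8601 string with a UTC offset.
    return AuthEvent(
        user=record["actor"].lower(),
        source="idp",
        success=record["outcome"] == "SUCCESS",
        src_ip=record["ip"],
        timestamp=datetime.fromisoformat(record["time"]),
    )
```

Lowercasing usernames is a small choice with big payoff: "Alice" on the VPN and "alice" in the IdP become the same person for every downstream rule.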

Cloud control plane logs. AWS CloudTrail, GCP Cloud Logging, Azure Activity Logs. Someone creating resources, changing IAM, disabling security controls—you need to know.

Network flows. Not every packet, just metadata—who's talking to whom, how much data, what ports. Enough to spot data exfiltration and lateral movement.
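As a rough sketch of what flow metadata alone can tell you, the function below sums bytes sent from private addresses to globally routable ones per source/destination pair and returns the pairs above a threshold. The record keys (`src_ip`, `dst_ip`, `bytes`) and the fixed threshold are assumptions; real flow logs such as VPC Flow Logs or NetFlow exports use their own field names.

```python
import ipaddress
from collections import defaultdict

def outbound_heavy_hitters(flows: list[dict], threshold_bytes: int) -> list[tuple[str, str, int]]:
    """Return (src, dst, total_bytes) for internal-to-external pairs whose
    total exceeds threshold_bytes, largest first."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    for f in flows:
        src = ipaddress.ip_address(f["src_ip"])
        dst = ipaddress.ip_address(f["dst_ip"])
        # Only count traffic leaving the internal network for the public internet.
        if src.is_private and dst.is_global:
            totals[(f["src_ip"], f["dst_ip"])] += f["bytes"]
    hits = [(s, d, b) for (s, d), b in totals.items() if b > threshold_bytes]
    return sorted(hits, key=lambda h: h[2], reverse=True)
```

Internal-to-internal traffic is deliberately excluded here; lateral movement needs a different view of the same flows.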

Critical application logs. Authentication, authorization, sensitive operations. What "sensitive" means depends on your business.
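Application logs are much easier to monitor when sensitive operations emit structured events instead of free text. A minimal sketch using only the standard library; the `audit_event` helper and its field names are hypothetical, not a standard schema:

```python
import json
from datetime import datetime, timezone

def audit_event(action: str, actor: str, target: str, allowed: bool, **details) -> str:
    """Serialize one sensitive operation as a single JSON log line.

    Records who (actor) did what (action) to which resource (target), when,
    and whether authorization allowed it, plus any extra context.
    """
    return json.dumps(
        {
            "ts": datetime.now(timezone.utc).isoformat(),
            "type": "audit",
            "action": action,
            "actor": actor,
            "target": target,
            "allowed": allowed,
            "details": details,
        },
        sort_keys=True,
    )
```

You'd hand the returned line to your normal logger, for example `logging.getLogger("audit").info(audit_event("export_customers", "alice", "crm", True, rows=5000))`, and let the SIEM parse the JSON without custom regexes. Logging denied attempts (`allowed=False`) matters as much as successful ones.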

Detection that works

Don't build your detection strategy on signatures. Attackers change their tools faster than you can write rules.

Focus on behavioral detection:

Anomalous authentication—logins from unusual locations, unusual times, unusual patterns. Failed login spikes. Successful login after many failures.
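One of these signals, a successful login after many failures, reduces to a sliding window per user. A sketch, assuming auth events have already been normalized to `(timestamp, user, success)` tuples sorted by time; the defaults (5 failures within 10 minutes) are illustrative, not recommendations:

```python
from collections import defaultdict, deque
from collections.abc import Iterable
from datetime import datetime, timedelta

def success_after_failures(
    events: Iterable[tuple[datetime, str, bool]],
    min_failures: int = 5,
    window: timedelta = timedelta(minutes=10),
) -> list[tuple[datetime, str, int]]:
    """Flag a successful login preceded by at least `min_failures` failed
    attempts for the same user within `window`."""
    recent_failures: dict[str, deque] = defaultdict(deque)  # user -> failure timestamps
    alerts = []
    for ts, user, success in events:
        failures = recent_failures[user]
        # Forget failures that have aged out of the window.
        while failures and ts - failures[0] > window:
            failures.popleft()
        if not success:
            failures.append(ts)
        elif len(failures) >= min_failures:
            alerts.append((ts, user, len(failures)))
            failures.clear()  # start fresh so the next success doesn't re-alert
    return alerts
```

The same per-user window, minus the success condition, gives you a basic failed-login spike detector.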

Privilege changes—anyone getting admin access, especially outside change windows. Service accounts getting permissions they shouldn't have.
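Both privilege checks are simple set logic once the data is in one place. A sketch, assuming grant events are normalized into dicts with `time`, `principal`, and `role` keys, change windows are exported from your change-management system, and you maintain a per-service-account role allowlist; every name here is hypothetical:

```python
from dataclasses import dataclass
from datetime import datetime

@dataclass(frozen=True)
class ChangeWindow:
    start: datetime
    end: datetime

def grants_outside_windows(grants: list[dict], windows: list[ChangeWindow]) -> list[dict]:
    """Privilege grants whose 'time' falls outside every approved change window."""
    return [g for g in grants
            if not any(w.start <= g["time"] <= w.end for w in windows)]

def service_account_violations(grants: list[dict], allowed_roles: dict[str, set[str]]) -> list[dict]:
    """Grants to known service accounts for roles not on that account's allowlist."""
    return [g for g in grants
            if g["principal"] in allowed_roles
            and g["role"] not in allowed_roles[g["principal"]]]
```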

Data movement—unusual upload volumes, data leaving to weird destinations, compressed archives being created then transferred.
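One simple definition of "unusual upload volume" is a large deviation from the host's own history. This sketch uses a z-score over daily outbound byte totals; the threshold of 3 is an assumption, and spiky or skewed histories may call for a more robust measure such as one based on the median:

```python
from statistics import mean, stdev

def upload_anomaly(history: list[float], today: float, z_threshold: float = 3.0) -> tuple[bool, float]:
    """Compare today's outbound bytes for a host against its previous daily totals.

    `history` needs at least two values. Returns (is_anomalous, z_score).
    Only increases are flagged; a quiet day is not treated as suspicious.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat history: any increase stands out, but z is undefined.
        z = float("inf") if today > mu else 0.0
    else:
        z = (today - mu) / sigma
    return z > z_threshold, z
```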

Infrastructure changes—security controls being disabled, logging being stopped, backdoor accounts being created.
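On AWS, several of these show up directly as CloudTrail event names. CloudTrail delivers log files as JSON objects with a top-level `Records` list, and each record carries `eventSource`, `eventName`, `eventTime`, and `userIdentity`. The watchlist below is a small starting point, not a complete list, and the function name is hypothetical:

```python
import json
from collections.abc import Iterator

# (eventSource, eventName) pairs worth an immediate look; extend for your environment.
WATCHLIST = {
    ("cloudtrail.amazonaws.com", "StopLogging"): "logging stopped",
    ("cloudtrail.amazonaws.com", "DeleteTrail"): "trail deleted",
    ("ec2.amazonaws.com", "DeleteFlowLogs"): "VPC flow logs deleted",
    ("guardduty.amazonaws.com", "DeleteDetector"): "GuardDuty detector deleted",
    ("iam.amazonaws.com", "CreateUser"): "new IAM user",
    ("iam.amazonaws.com", "CreateAccessKey"): "new access key",
}

def suspicious_control_plane_events(log_file_text: str) -> Iterator[tuple[str, str, str]]:
    """Yield (reason, eventTime, actor ARN) for watched events in one CloudTrail log file.

    Calls that failed (records carrying an errorCode) are skipped here, though
    repeated failures may deserve a rule of their own.
    """
    for rec in json.loads(log_file_text).get("Records", []):
        key = (rec.get("eventSource"), rec.get("eventName"))
        if key in WATCHLIST and "errorCode" not in rec:
            actor = rec.get("userIdentity", {}).get("arn", "unknown")
            yield WATCHLIST[key], rec.get("eventTime"), actor
```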

Automation where it counts

Not everything needs automated response. Some things should:

Known malware detected? Auto-isolate the host. No human needed.

Impossible travel detected—someone authenticated from New York then Tokyo 20 minutes later? Terminate sessions, force re-auth.
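The impossible-travel check is distance over time. A sketch, assuming each login already carries latitude and longitude from IP geolocation; the 1,000 km/h ceiling (roughly airliner speed) and the 500 km minimum distance (to absorb geolocation error) are assumed values:

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
Login = tuple[datetime, float, float]  # (timestamp, latitude, longitude)

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def is_impossible_travel(prev: Login, curr: Login,
                         max_speed_kmh: float = 1000.0,
                         min_distance_km: float = 500.0) -> bool:
    """True if two logins by the same user imply travel faster than max_speed_kmh."""
    (t1, lat1, lon1), (t2, lat2, lon2) = prev, curr
    km = haversine_km(lat1, lon1, lat2, lon2)
    if km < min_distance_km:
        return False  # nearby locations are within geolocation error
    hours = abs((t2 - t1).total_seconds()) / 3600
    return hours == 0 or km / hours > max_speed_kmh
```

VPNs and cloud egress points can make legitimate users look like they teleport, so an allowlist of known egress IPs usually sits in front of this check.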

Security control disabled in cloud? Auto re-enable it and alert the security team.

High-severity vulnerability found in production? Auto-create ticket, notify owner, set deadline for remediation.
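The split between "automate it" and "send it to a human" can be made explicit as a routing table. In this sketch the detection kinds, the 0.9 confidence threshold, and the action functions are all hypothetical stand-ins; real actions would call your EDR, identity provider, or cloud APIs and should still notify the security team:

```python
from collections.abc import Callable
from dataclasses import dataclass

@dataclass(frozen=True)
class Detection:
    kind: str          # e.g. "known_malware", "impossible_travel"
    confidence: float  # 0.0 to 1.0, as scored by the detection rule
    entity: str        # the host, user, or resource involved

# Hypothetical response actions; each just describes what it would do.
def isolate_host(d: Detection) -> str:
    return f"isolated {d.entity}"

def revoke_sessions(d: Detection) -> str:
    return f"revoked sessions and forced re-auth for {d.entity}"

def reenable_control(d: Detection) -> str:
    return f"re-enabled control {d.entity}"

def open_ticket(d: Detection) -> str:
    return f"opened remediation ticket for {d.entity}"

PLAYBOOKS: dict[str, Callable[[Detection], str]] = {
    "known_malware": isolate_host,
    "impossible_travel": revoke_sessions,
    "control_disabled": reenable_control,
    "prod_vuln_high": open_ticket,
}

def respond(d: Detection, auto_threshold: float = 0.9) -> str:
    """Run the automated playbook only for known kinds at high confidence;
    everything else is queued for an analyst."""
    action = PLAYBOOKS.get(d.kind)
    if action is None or d.confidence < auto_threshold:
        return f"queued for analyst: {d.kind} on {d.entity}"
    return action(d)
```

Keeping the table small and explicit makes it easy to review what the system is allowed to do on its own.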

The human element

Best SIEM implementation I've seen: a team of three analysts at a mid-sized company, handling 50,000 events daily with a low false-positive rate. How?

They tuned aggressively. Every false positive was analyzed and rules were adjusted. It took six months, but eventually the signal-to-noise ratio was excellent.
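Tuning is easier to sustain when you can see which rules are noisy. A sketch that computes each rule's false-positive rate from analyst verdicts on closed alerts; the verdict labels and the cut-offs (at least 20 alerts, more than 50% false positives) are assumptions:

```python
from collections import Counter

def rules_to_tune(dispositions: list[tuple[str, str]],
                  min_alerts: int = 20,
                  max_fp_rate: float = 0.5) -> list[tuple[str, float, int]]:
    """Return (rule_id, fp_rate, alert_count) for rules with enough volume to judge
    and a false-positive rate above max_fp_rate, noisiest first.

    `dispositions` holds (rule_id, verdict) pairs, where verdict is
    "true_positive" or "false_positive" as recorded by the analyst.
    """
    total: Counter = Counter()
    false_pos: Counter = Counter()
    for rule_id, verdict in dispositions:
        total[rule_id] += 1
        if verdict == "false_positive":
            false_pos[rule_id] += 1
    noisy = [(r, false_pos[r] / n, n) for r, n in total.items()
             if n >= min_alerts and false_pos[r] / n > max_fp_rate]
    return sorted(noisy, key=lambda x: x[1], reverse=True)
```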

They automated the boring stuff. Initial triage, evidence gathering, basic containment. Analysts spent time hunting and investigating, not clicking through alerts.

They worked with development teams. When something triggered repeatedly, they'd sit with devs and figure out whether it was legitimate behavior or actually risky. That built trust and improved detection.

They measured what mattered. Not "alerts generated" but "threats detected and contained." Not "mean time to alert" but "mean time to containment."
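Mean time to containment falls straight out of two timestamps per incident. A sketch, assuming each incident record has hypothetical `detected` and `contained` fields; incidents that are still open are reported separately rather than silently dropped:

```python
from datetime import datetime, timedelta
from typing import Optional

def mean_time_to_containment(
    incidents: list[dict[str, Optional[datetime]]],
) -> tuple[Optional[timedelta], int]:
    """Return (mean detected-to-contained time, number of still-open incidents).

    'contained' is None while an incident is open.
    """
    durations = [i["contained"] - i["detected"] for i in incidents
                 if i.get("contained") is not None]
    still_open = len(incidents) - len(durations)
    if not durations:
        return None, still_open
    return sum(durations, timedelta()) / len(durations), still_open
```

With only a handful of incidents, one long one dominates the mean, so tracking the median alongside it can be worth it.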

Start here

If you're building a SIEM from scratch:

Month 1: Get authentication logs centralized and build basic anomaly detection. This alone catches most account compromises.

Month 2: Add cloud control plane monitoring. Detect infrastructure changes that shouldn't happen.

Month 3: Baseline normal behavior and start flagging deviations. User behavior, network patterns, system activity.
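Baselining can start very simply. This sketch records how often each user logs in at each hour of the day and flags logins in hours that make up less than 2% of that user's history. The thresholds are assumptions, and the sketch ignores time zones and the wrap-around between 23:00 and 00:00:

```python
from collections import Counter, defaultdict

def build_hour_baseline(history: list[tuple[str, int]]) -> dict[str, Counter]:
    """history: (user, hour_of_day) pairs from past successful logins.
    Returns user -> Counter of logins per hour."""
    baseline: dict[str, Counter] = defaultdict(Counter)
    for user, hour in history:
        baseline[user][hour] += 1
    return baseline

def is_unusual_hour(baseline: dict[str, Counter], user: str, hour: int,
                    min_history: int = 30, min_share: float = 0.02) -> bool:
    """Flag a login if the user has enough history and fewer than min_share
    of their past logins fell in this hour. Users with too little history
    are not flagged, since there is no baseline to deviate from yet."""
    counts = baseline.get(user)
    total = sum(counts.values()) if counts else 0
    if total < min_history:
        return False
    return counts[hour] / total < min_share
```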

Month 4: Add automated response for high-confidence detections. Build runbooks for everything else.

Months 5-6: Hunt through your data for things you're not detecting yet. Tune, expand, improve.

What not to do

Don't try to ingest everything on day one. Start small, prove value, expand.

Don't deploy vendor rules as-is. They don't know your environment. Tune for your reality.

Don't measure success by volume of alerts. Measure by threats caught and time to contain.

Don't treat SIEM as "fire and forget." It needs continuous tuning and improvement.

SIEM done right is a force multiplier. Done wrong, it's an expensive alert generator that demoralizes your team. The difference is starting with clear goals and continuously optimizing for signal over noise.


© RAJ PATHAK
